Hunting Compressed Kill Chains

How we can use reasoning and behavior to outsmart faster attacks

Tailored access is going mainstream as AI-assisted tooling compresses entire kill chains into minutes. We’re not seeing a plethora of new threat vectors; instead, we’re seeing broader iterations of proven ones executed at machine speed. Shorter, faster, iterative workflows are the adversary’s new path into our environments, and our traditional detection timelines aren’t built for it.

We used to catch intrusions because they took time. Attackers needed hours or days (remember when we actually tracked breakout time as a meaningful metric?) to move laterally, enumerate targets, and find the good stuff. That gave us gaps: strange logins we could triage, lateral movement that triggered alerts, enough time to respond before the damage was done.

Now? Recon, access, exfiltration... the whole sequence happens faster than your alert queue refreshes. “Alert and respond” becomes “reconstruct what already happened.” You’re not catching intrusions. You’re doing forensics on yesterday’s breach.

This week’s write-up came out of a conversation I had last week. In a world with vibe hacking, even if your stack works fine and your controls are good, the entire kill chain can be executed before your first alert fires. Here’s a practical approach to identifying these compressed sequences using tools you already have.

What this looks like

The phases haven’t changed. It’s still reconnaissance, initial access, lateral movement, data access, exfiltration, impact. Same playbook, just faster and more agentic.

What’s different is tempo. AI-assisted intrusions collapse kill chains into tight, near-continuous flows. Recon is automated. Credential use is scripted. Lateral movement and exfiltration happen in one push with no pauses for decision-making. If first credential use to first exfiltration is 5 minutes, odds are alert workflows are already too slow.

Organizations need to detect the compression itself, not just the individual events.

Telemetry requirements (you can’t hunt what you can’t see):

Authentication logs with timestamps and source context

Process creation telemetry with command-line visibility

File access or object read logs for critical systems and SaaS apps

Proxy or egress logs with URLs, byte counts, destinations

Cloud audit logs for API and IAM events

If you’re missing any of these, odds are you have a blind spot. And if you have the logs but no correlation capability, you’re already playing at a disadvantage. I’ve seen environments with all the right data sources sitting in separate silos, with no way to link events by user or host.

Three patterns that matter

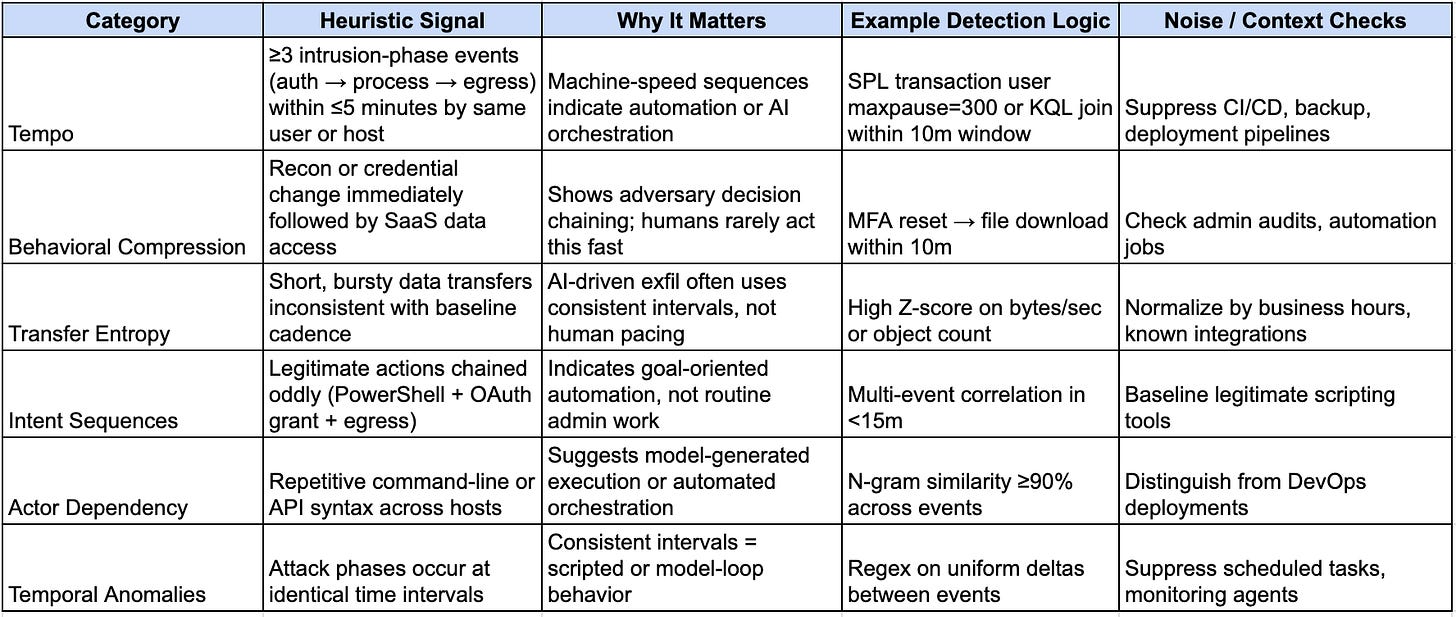

TL;DR: here are three behavioral groupings that should indicate whether your adversary is using automation (or AI) to target you.

Compressed sequences. Intrusion-phase events (auth, process, egress) that happen too fast to be human-operated. Humans hesitate. They typo, backtrack, pause between decisions. Machines don’t. Authentication, process execution, and egress within 5 minutes following logical attack progression? Not human.

High-entropy transfers. Short bursts of data movement with unnatural consistency. Humans don’t download 40 files in 90 seconds with perfectly even spacing. AI-driven exfiltration is metronomic and patterned. Organic behavior isn’t.

Decision-point anomalies. Actions compressed into timeframes inconsistent with human workflows. MFA reset immediately followed by SaaS file access. New API key created and used within minutes. When these happen this fast, it’s either automation or someone with zero hesitation executing a predetermined plan.

What this looks like in your data:

None of this relies on signatures or IOCs; it’s all about tempo. How fast, how consistent, how deliberate. If something feels too clean or too synchronized, dig deeper.
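To make "how consistent" concrete, here is a minimal Python sketch of one way to score the spacing between transfer events. It assumes you can export a list of epoch timestamps per user or host; the function names and the thresholds (`min_events=10`, `max_cv=0.1`) are my own placeholders, not tested baselines. Evenly spaced, scripted transfers show up as very low variation in the gaps between events.

```python
from statistics import mean, pstdev

def spacing_regularity(timestamps):
    """Coefficient of variation (stdev / mean) of the gaps between events.

    Values near 0 mean metronomic spacing. Returns None when there are
    fewer than three events or the average gap is zero.
    """
    ts = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    if len(gaps) < 2:
        return None
    avg_gap = mean(gaps)
    if avg_gap == 0:
        return None
    return pstdev(gaps) / avg_gap

def looks_metronomic(timestamps, min_events=10, max_cv=0.1):
    """Flag bursts with many events and almost no variation in spacing.

    min_events and max_cv are assumed starting points; baseline them
    against your own sync clients and backup jobs before alerting.
    """
    cv = spacing_regularity(timestamps)
    return len(timestamps) >= min_events and cv is not None and cv <= max_cv

# 40 downloads exactly 2 seconds apart versus an irregular human session
scripted = [1000 + 2 * i for i in range(40)]
human = [0, 4, 5, 31, 33, 90, 92, 140, 300, 305, 420]
print(looks_metronomic(scripted), looks_metronomic(human))
```

Legitimate automation (sync agents, backups) is also metronomic, so treat this as a signal to combine with the other patterns rather than an alert on its own.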

Why tempo matters

Let’s consider how we think about detections: as sources of signal that help humans deduce intent.

Traditional detection: “Is this malicious?”

Tempo-based detection: “Is this moving at a pace inconsistent with human decision-making?”

When adversaries compress the entire kill chain into 5 minutes, IOC-based detections become postmortem documentation. But if you surface the compression itself (the unnatural cadence no human produces), you catch them while they’re still in your environment.

These detections also degrade gracefully. If attackers know you’re hunting 5-minute sequences and deliberately slow to 10, you’ve still won. You forced them to move at a pace where your other detections have time to fire. You broke their tempo.

Queries

In an effort to get the ball rolling, consider mapping out behavioral detections to the table in the previous section. Here are two hunting examples written in SPL (for all my Splunk users out there). Note that the field names are generic. Adjust for your environment.

Compressed sequence within 5 minutes

User-scoped transactions packing authentication, process execution, and egress into 5 minutes or less.

Query

index=auth OR index=process OR index=proxy earliest=-10m

| eval event_type=case(

index=="auth", "auth",

index=="process", "proc",

index=="proxy", "egress"

)

| table _time host user src_ip process command event_type url bytes

| transaction user maxpause=300 startswith=(event_type="auth") endswith=(event_type="egress")

| where eventcount > 3 AND duration <= 300

| eval sequence_score = mvcount(mvsort(mvappend(

mvfilter(match(process, "(?i)powershell|cmd|wscript")),

mvfilter(match(url, "/download|/export"))

)))

| where sequence_score >= 2

| table _time user host duration eventcount sequence_score

Reasoning: This should catch anyone who authenticates, executes processes (especially PowerShell/cmd), and generates egress traffic within 5 minutes with at least two suspicious indicators.

Legitimate users don’t authenticate and immediately execute scripts that download data. When this fires, it’s either automation using accounts you should whitelist, or an attacker.

Tune by whitelisting automation accounts, adjusting maxpause based on your environment’s normal tempo, and modifying the sequence_score regex for your suspicious processes. Add source IP reputation or geo checks if needed.
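If you want to sanity-check the ordering logic outside your SIEM, here is a rough Python sketch of the same idea: per user, look for auth, then process, then egress, in that order, inside one window. The event tuple shape, the `auth`/`proc`/`egress` labels, and the function name are assumptions for illustration, so map them to whatever your export looks like.

```python
from collections import defaultdict

def compressed_sequences(events, window_seconds=300):
    """Find auth -> proc -> egress chains per user inside one window.

    events: iterable of (epoch_seconds, user, event_type) where event_type
    is 'auth', 'proc', or 'egress' (hypothetical labels).
    Returns a list of (user, auth_time, egress_time, duration).
    """
    by_user = defaultdict(list)
    for ts, user, event_type in events:
        by_user[user].append((ts, event_type))

    hits = []
    for user, rows in by_user.items():
        last_auth = None   # most recent authentication seen
        armed_auth = None  # an auth that was followed by a process event
        for ts, event_type in sorted(rows, key=lambda r: r[0]):
            if event_type == "auth":
                last_auth = ts
            elif event_type == "proc" and last_auth is not None:
                armed_auth = last_auth
            elif event_type == "egress" and armed_auth is not None:
                duration = ts - armed_auth
                if duration <= window_seconds:
                    hits.append((user, armed_auth, ts, duration))
                # start over so each chain is reported once
                last_auth = None
                armed_auth = None
    return hits

sample = [
    (100, "alice", "auth"), (130, "alice", "proc"), (200, "alice", "egress"),
    (100, "bob", "auth"), (4000, "bob", "proc"), (4100, "bob", "egress"),
    (50, "carol", "auth"), (60, "carol", "egress"),  # no process event
]
print(compressed_sequences(sample))
```

Unlike a plain start/end match, this version only fires when a process event actually sits between the authentication and the egress, which is the progression the pattern describes.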

MFA reset followed by file access

Decision-point compression. MFA reset immediately followed by file downloads.

Query

(index=o365 sourcetype="o365:management:activity" Workload="AzureActiveDirectory"

(Operation="Reset user password." OR Operation="User registered security info" OR Operation="Change user password."))

OR

(index=o365 sourcetype="o365:management:activity" Workload="OneDrive"

(Operation="FileDownloaded" OR Operation="FileAccessed" OR Operation="FileSyncDownloadedFull"))

| eval event_category=case(

Workload="AzureActiveDirectory", "mfa_reset",

Workload="OneDrive", "file_access",

1=1, "unknown"

)

| stats min(_time) as first_time, max(_time) as last_time, values(Operation) as operations,

values(OfficeObjectId) as files, dc(event_category) as category_count by UserId

| where category_count >= 2

| eval duration=last_time - first_time

| where duration <= 600

| convert ctime(first_time) ctime(last_time)

| table first_time UserId duration operations files

Reasoning: Legitimate credential resets take time. Users complete the reset, reauthenticate across services, navigate to resources. Going from credential change to file access in 10 minutes or less is suspicious.

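Adjust the 600-second threshold based on your users. Note that the query measures the span between a user’s first and last matching event in the search window and doesn’t check that the reset came first, so run it over short windows and confirm the order before escalating. Baseline help desk and admin accounts that reset credentials frequently. Weight file sensitivity (access to sensitive repos matters more than general shares). Enrich with device compliance or known device status.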
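For anyone validating hits by hand, this is a small Python sketch of the stricter version of that logic: the reset has to come first, and only the first file access after each reset is measured. The tuple layout and the `mfa_reset`/`file_access` labels mirror the event_category values above, but the function itself is a hypothetical helper, not something your tooling ships with.

```python
from collections import defaultdict

def reset_then_access(events, window_seconds=600):
    """Pair each credential reset with the first file access that follows it.

    events: iterable of (epoch_seconds, user, category) where category is
    'mfa_reset' or 'file_access'.
    Returns a list of (user, reset_time, access_time, delta_seconds).
    """
    by_user = defaultdict(list)
    for ts, user, category in events:
        by_user[user].append((ts, category))

    hits = []
    for user, rows in by_user.items():
        last_reset = None
        for ts, category in sorted(rows, key=lambda r: r[0]):
            if category == "mfa_reset":
                last_reset = ts
            elif category == "file_access" and last_reset is not None:
                delta = ts - last_reset
                if delta <= window_seconds:
                    hits.append((user, last_reset, ts, delta))
                last_reset = None  # only the first access after a reset counts
    return hits

sample = [
    (1000, "alice", "mfa_reset"), (1120, "alice", "file_access"),
    (1130, "alice", "file_access"),
    (5000, "bob", "file_access"), (5100, "bob", "mfa_reset"),    # wrong order
    (9000, "carol", "mfa_reset"), (12600, "carol", "file_access"),  # too slow
]
print(reset_then_access(sample))
```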

Tuning

The best way to tune (aside from testing against your data) is to simulate these kinds of kill chains. It’s worth noting that compressed sequences generate noise if you skip baseline work. Take a real incident. Collapse the timeline into 5-10 minutes preserving event order. Replay it in a test index. Run your detection, see what fires, and measure coverage before and after tuning.

One replay teaches more than ten tabletop exercises. You’ll find gaps you’d never catch in theory, like missing command-line logging on specific hosts, or proxy logs without response codes or byte counts, which breaks exfiltration detection entirely.
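The "collapse the timeline" step is simple enough to script. Here is a minimal Python sketch, assuming your incident export is a list of (epoch_seconds, payload) pairs; `compress_timeline`, `target_seconds`, and `start_at` are names I made up for illustration. It rescales timestamps so the whole incident fits the target span while keeping event order and relative spacing.

```python
def compress_timeline(events, target_seconds=300, start_at=None):
    """Rescale event timestamps so the incident spans target_seconds.

    events: list of (epoch_seconds, payload). Order and relative spacing
    are preserved. start_at optionally moves the replay to a new start time.
    """
    ordered = sorted(events, key=lambda e: e[0])  # stable sort keeps ties in order
    if not ordered:
        return []
    first = ordered[0][0]
    span = ordered[-1][0] - first
    base = first if start_at is None else start_at
    compressed = []
    for ts, payload in ordered:
        # When every event shares one timestamp there is nothing to scale
        offset = (ts - first) * target_seconds / span if span > 0 else 0
        compressed.append((base + offset, payload))
    return compressed

# A two-hour incident squeezed into five minutes
incident = [(0, "auth"), (3600, "proc"), (7200, "egress")]
replay = compress_timeline(incident, target_seconds=300)
print(replay)
```

Write the rescaled events to your test index with the new timestamps, then run the detections against that index.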

Whitelist automation accounts and scheduled jobs. Enrich with asset metadata (dev, CI/CD, test environments). Build baselines by role and time of day. Weight anomalies touching privileged resources or originating from unusual locations.

Don’t let perfect be the enemy of good. Don’t aim for zero false positives; it’s impossible. Aim for enough context per alert that someone with limited understanding of the detection can make a decision in under 5 minutes. If they’re spending 15 minutes gathering context per alert, your detection is broken regardless of accuracy.

Metrics that actually matter

Am I proposing MTTD be 60 seconds or less? No. But we should start to build guardrails and a framework that propels us forward. If your time-to-detect is over 10 minutes from first event to alert, you’re already behind on compressed sequences.

Track coverage delta, meaning what percentage of these fast-moving attacks you’re actually catching after each round of tuning. Watch your false positive rate per 1,000 hosts. If you’re pushing more than 5-10 false positives per day per thousand hosts, your analysts will burn out and start ignoring alerts entirely.
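Both numbers are plain arithmetic, so here is a short Python sketch to keep the definitions honest. The function names and the sample figures are illustrative assumptions, not measurements from a real environment.

```python
def fp_per_thousand_hosts_per_day(false_positives, hosts, days):
    """Normalize a false positive count to 'per day per 1,000 hosts'."""
    if hosts <= 0 or days <= 0:
        raise ValueError("hosts and days must be positive")
    return false_positives / days / (hosts / 1000)

def coverage_delta(caught_before, caught_after, total_replayed):
    """Change in coverage, in percentage points, after a round of tuning."""
    if total_replayed <= 0:
        raise ValueError("total_replayed must be positive")
    return 100 * (caught_after - caught_before) / total_replayed

# 420 false positives over 7 days across 12,000 hosts
rate = fp_per_thousand_hosts_per_day(420, hosts=12000, days=7)
# 4 of 10 replayed sequences caught before tuning, 7 of 10 after
delta = coverage_delta(4, 7, 10)
print(rate, delta)
```

In this made-up example the rate lands at the low end of the 5-10 range and coverage improves by 30 percentage points.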

And measure iteration velocity. How long does it take you to go from “I have a hypothesis” to “I have a deployed, tested rule”? That’s the metric that actually matters for staying ahead of this problem. If it takes you 3 weeks to deploy a new detection, you’re building for last month’s threat, not today’s.

Wrapping up

We’ve covered a lot, and much of this is meant to start a discussion, not to solve the AI kill chain in one Substack post. Start small. Pick one of these patterns and build it out, test it, tune it, and tweak it. Measure whether it’s actually catching what you think it should catch (if it doesn’t, please let me know). Then do it again next month with another pattern.

The goal of these defensive techniques isn’t perfect adversary attribution, but rather interruption. If you can spot the sequence before the exfiltration completes, you’ve won that round. Machine-speed attacks aren’t unstoppable. They’re just fast. We can match that speed if we stop overthinking and start iterating.

Stay secure and stay curious, my friends,

Damien